Even IT Companies Have IT Issues — Here's How We Handle Ours

A behind-the-scenes look at how we keep calm when technology doesn't cooperate.

There’s a quiet pressure that comes with working in technology: the assumption that you’ve personally solved every issue forever. That your laptop always behaves, your tools always cooperate, and the universe never conspires against you ten minutes before a meeting starts.

The truth is more ordinary. We have IT issues too. A router decides to reset itself, a software update changes something nobody asked it to change, a headset gives out at exactly the wrong moment; in fact, our office printer is the bane of everyone’s existence. None of that is a failure of expertise. It’s just what working with technology looks like.

What separates a good IT operation from a frustrating one is whether everything that follows a problem feels predictable. That takes a process.

Detection, classification, communication, resolution, closure — handled the same way every time, so the people affected aren’t also stuck managing the chaos.

Here’s how we think about it at inWorks LLC:

Classify before you communicate

The fastest way to turn a small problem into a big-feeling one is to communicate without context. So, before anyone sounds the alarm, we classify what we’re dealing with.

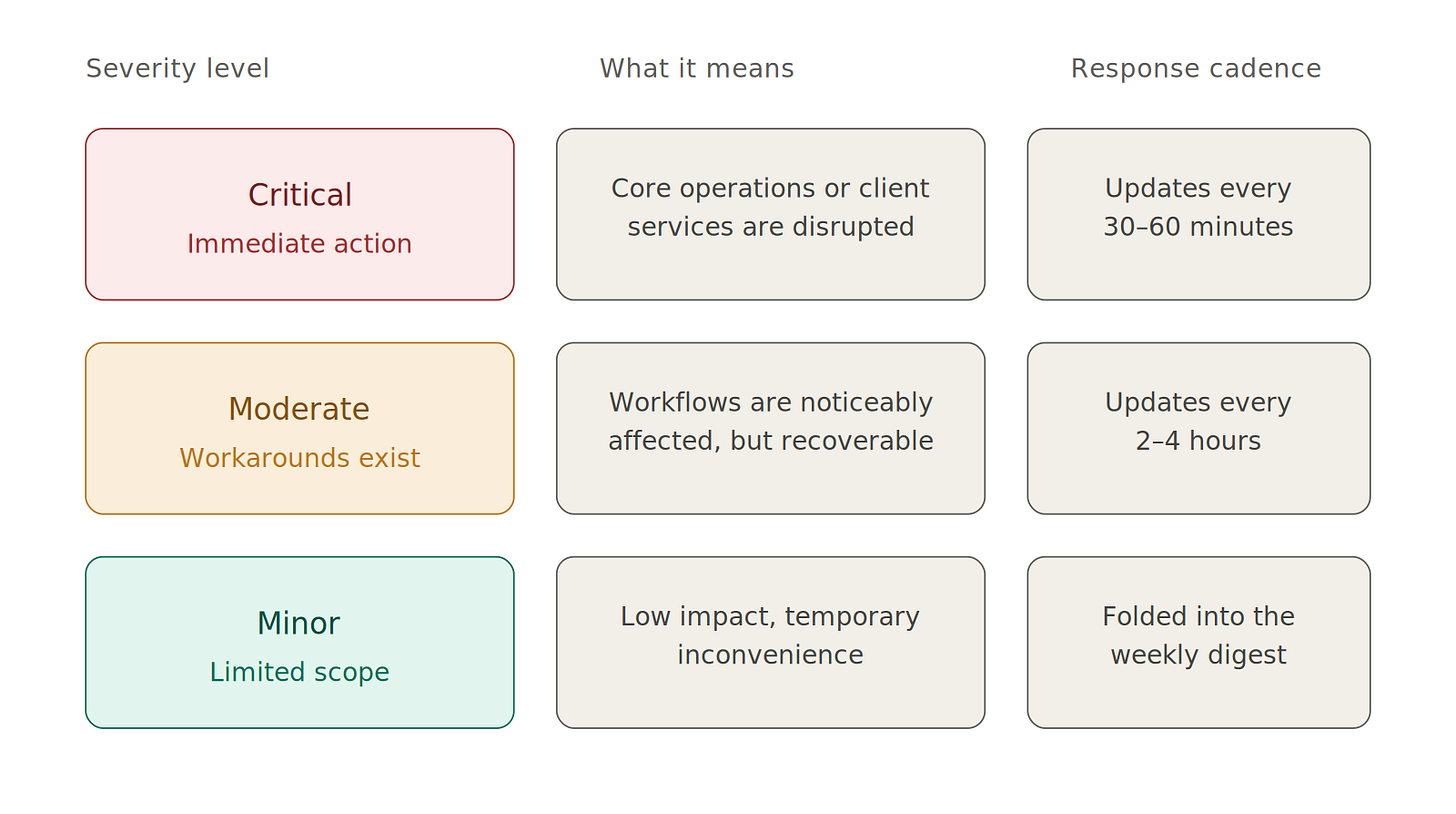

We use three levels:

Critical — core operations or client-facing services are disrupted and immediate action is required

Moderate — workflows are noticeably affected, but workarounds usually exist

Minor — limited scope, low impact, temporary inconvenience

Classification shapes everything that comes after it: how urgent the response is, who needs to know, which channels carry the message, and how often we update. Skip that step and every issue starts to sound the same, which is when people stop trusting the alerts entirely.
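For teams that like to see the idea written down, here is a minimal sketch of the three levels as a small Python function. The function name and the two yes/no inputs are assumptions made for illustration, not a description of any particular tool; a real classification usually involves more judgment than two flags.

```python
from enum import Enum


class Severity(Enum):
    CRITICAL = "critical"
    MODERATE = "moderate"
    MINOR = "minor"


def classify(core_or_client_disrupted: bool, workflows_affected: bool) -> Severity:
    """Map two yes/no observations onto the three levels (hypothetical inputs)."""
    if core_or_client_disrupted:
        # Core operations or client-facing services are down.
        return Severity.CRITICAL
    if workflows_affected:
        # Work is noticeably slowed, but workarounds usually exist.
        return Severity.MODERATE
    # Limited scope, low impact, temporary inconvenience.
    return Severity.MINOR


print(classify(core_or_client_disrupted=False, workflows_affected=True).value)
```

The point of writing it this way is that the most severe condition is checked first, so an issue that disrupts clients is never downgraded just because a workaround also exists.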

Triage before you react

Once something is classified, the next question is whether it actually needs a response right now, or whether right now just feels urgent because the message arrived during the workday.

A lot of teams confuse the two. They treat every notification like an emergency, exhaust their attention, and then have nothing left for the issue that actually warrants it.

Triage means asking a few specific questions.

Is this security-related? Is anyone fully blocked from doing their work? Is there a safe workaround in the meantime? Does this need a same-hour response, or same-day clarity?

The answers determine how the next hour gets spent. Most issues don’t need an immediate fix, and almost all of them benefit from a careful response over a reflexive one.
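The triage questions can also be sketched as a short decision function. The rule below (security issues, or a fully blocked person with no safe workaround, get a same-hour response; everything else gets same-day clarity) is an assumed example of how the answers might map to a response target, not a fixed policy.

```python
from dataclasses import dataclass


@dataclass
class TriageAnswers:
    security_related: bool
    someone_fully_blocked: bool
    safe_workaround_exists: bool


def response_target(answers: TriageAnswers) -> str:
    """Turn the triage answers into a response target (assumed mapping)."""
    if answers.security_related:
        return "same-hour response"
    if answers.someone_fully_blocked and not answers.safe_workaround_exists:
        return "same-hour response"
    # Nobody is stuck, or a safe workaround buys time for a careful fix.
    return "same-day clarity"


printer_jam = TriageAnswers(
    security_related=False,
    someone_fully_blocked=True,
    safe_workaround_exists=True,  # e.g. print from another floor
)
print(response_target(printer_jam))
```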

Make sure the issue lives somewhere real

If a problem only exists in someone’s memory, in a chat thread, or in a vague intention to follow up later, it’s already lost.

A few practices keep that from happening:

A ticketing record for support work, so every request has an owner and a status regardless of how it came in — voicemail, email, referral, or someone catching us in passing.

A shared internal workspace where action items, decisions, and parking-lot ideas all live in one place. We use Microsoft Loop for this, but the tool matters less than the principle: a single source of truth that survives the meeting where it was created.

Two weeks later, when someone asks what we decided about a recurring issue, there’s an actual answer to point to instead of a guess.
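As a rough illustration of "every request has an owner and a status," here is a minimal ticket record in Python. The field names and the in-memory ID counter are hypothetical; any real ticketing system will have its own schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from itertools import count

_ticket_ids = count(1)  # simple in-memory ID source, for illustration only


@dataclass
class Ticket:
    summary: str
    source: str  # how it came in: "voicemail", "email", "referral", "in passing"
    owner: str
    status: str = "open"
    ticket_id: int = field(default_factory=lambda: next(_ticket_ids))
    opened_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))

    def __post_init__(self) -> None:
        # Refuse to record a request that nobody is responsible for.
        if not self.owner.strip():
            raise ValueError("every ticket needs an owner")


ticket = Ticket(summary="Printer offline again", source="in passing", owner="alex")
print(ticket.ticket_id, ticket.owner, ticket.status)
```

The only design choice worth noting is the owner check: a request without an owner is rejected at creation time instead of quietly sitting in the queue.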

Communicate in the right order

When updates about an issue go out, sequence matters as much as content.

Internal first, external second (if necessary). Our intranetValet team gets briefed before anything publishes, with a description, time frame, and context — so anyone who fields a follow-up question already knows the situation. Drafts get reviewed against a template we maintain for this purpose, then go out through the channels appropriate to the impact level.

Critical issues are posted immediately on the intranet, on Substack, and by email. Moderate issues go out within two to four hours. Minor issues are folded into a weekly digest.
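Those timing rules are simple enough to sketch in a few lines of Python. The function name is hypothetical, and treating the upper end of the two-to-four-hour window as the deadline is an assumption; the sketch covers only when the first update is due, not which channels carry it.

```python
from datetime import datetime, timedelta
from typing import Optional


def publish_deadline(severity: str, detected_at: datetime) -> Optional[datetime]:
    """Latest time the first update should go out; None means 'weekly digest'."""
    if severity == "critical":
        return detected_at  # post immediately
    if severity == "moderate":
        # Assumption: use the upper bound of the two-to-four-hour window.
        return detected_at + timedelta(hours=4)
    if severity == "minor":
        return None  # folded into the weekly digest
    raise ValueError(f"unknown severity: {severity!r}")


detected = datetime(2024, 1, 8, 9, 0)
print(publish_deadline("moderate", detected))
```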

Substack is part of how we communicate operationally, not just how we publish ideas. If someone subscribes to hear how we think, we want them to hear from us when something is actively happening too.

Close the loop, and learn

The last phase is something a lot of teams skip.

When an issue is resolved, we mark it resolved across every channel where we posted about it, and we send a final summary. For Critical and Moderate issues, we run an internal debrief afterward — what happened, what we’d do differently, what’s worth baking into the next version of the process.

This is where reliability compounds over time. Fixing a single issue helps once. Improving the system that produced it helps every time something similar shows up later.

“No issues” is unrealistic; shoot for “no confusion”

Technology is too complicated and too interdependent for any team to operate without incidents. The realistic goal is making sure incidents don’t generate confusion on top of themselves.

If something breaks at your organization tomorrow, the question worth asking is where the truth about it would live. If the honest answer is “in someone’s memory” or “scattered across three chat threads,” there isn’t a system supporting the most vulnerable part of your workflow.

That’s something we can help with.

inWorks LLC is a technology and systems company helping small businesses, creatives, and growing organizations build infrastructure they can actually use. Founded by Ilya Lehrman, inWorks has spent over 15 years thinking carefully about how technology should serve people — not the other way around.

📞 Call 267-857-8066 to start the conversation about how your organization handles incidents, communication, and the daily friction of technology that doesn’t cooperate.

For ongoing insights on current events in tech, cybersecurity, and protection best practices, follow inWorks LLC on LinkedIn. You’ll find practical guidance designed for founders, operators, and leadership teams.